To create the decision tree, I used the DecisionTreeClassifier within the scikit-learn package (refer to the documentation for further information). Wine_data = pd.read_csv( '', names = wine_names)Īfter importing the pandas library and the wine dataset, I converted the dataset to a pandas DataFrame. 'Flavanoids', 'Nonflavanoid phenols', 'Proanthocyanins', 'Color intensity', 'Hue', 'OD280/OD315',\

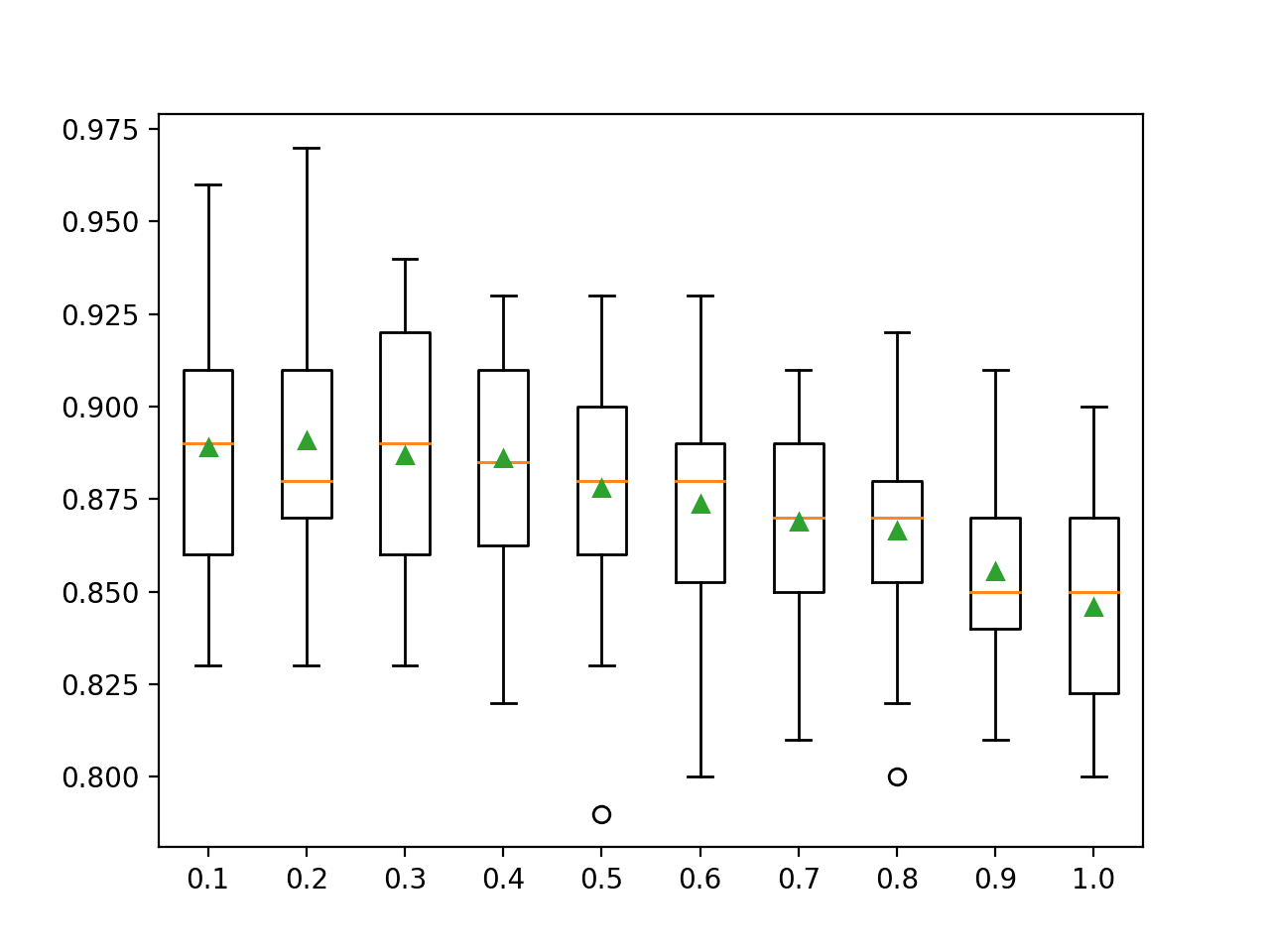

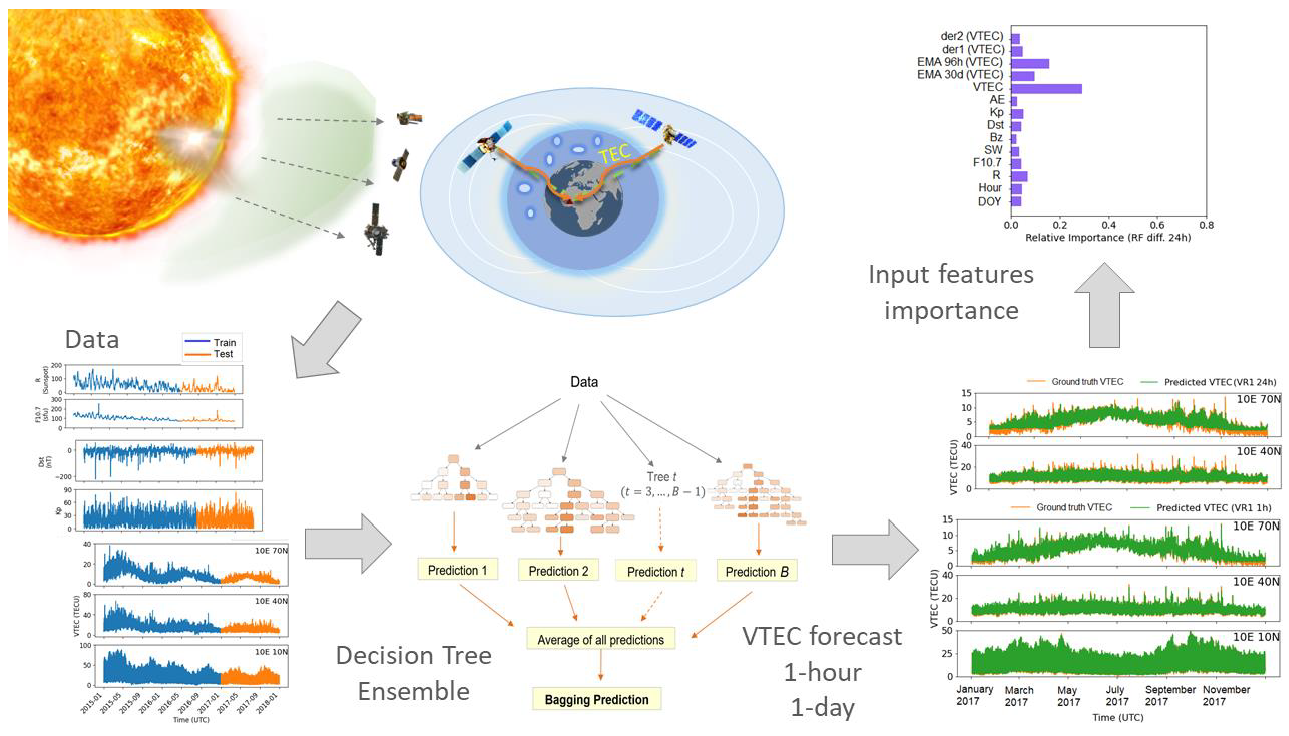

Wine_names = ['Class', 'Alcohol', 'Malic acid', 'Ash', 'Alcalinity of ash', 'Magnesium', 'Total phenols', \ Each observation is from one of three cultivars (represented as the ‘Class’ feature), with 13 constituent features that are the result of a chemical analysis. The wine dataset provides 178 clean observations of wine grown in the same region in Italy. The data we’ll use to build our model is from the UCI Machine Learning Repository. Installation instructions can be found here. To implement the algorithm, I’ll be using the free community edition of ActivePython 3.6. Commonly known as “bagging,” his technique creates an additional number of training sets by sampling uniformly and with replacement from the original training set. Yet another strategy to improve decision tree models was developed by Leo Breiman in this paper.In the case of regression trees, the loss function weights each leaf within the tree differently, such that leaves that predict well are rewarded and those that do not are punished. Several years later, Jerome Friedman published a paper outlining another strategy for improving any predictive algorithm that he called generalized gradient “boosting,” which involves minimizing an arbitrary loss function.His paper elucidated an algorithm to improve the predictive power of decision trees: grow an ensemble of decision trees on the same dataset, and use them all to predict the target variable. Tin Kam Ho first introduced random forest predictors in the mid-1990s.Coupled with ensemble methods that data scientists have developed, like boosting and bagging, they can achieve surprisingly high predictability.Ī brief history may help with understanding how boosting and bagging came about: Without any fine tuning of the algorithm, decision trees produce moderately successful results. This elegant simplicity does not limit the powerful predictive ability of models based on decision trees.

Figure 1: Decision treeĪs you can see, the tree is a simple and easy way to visualize the results of an algorithm, and understand how decisions are made. In our case, the features are Alcohol and OD280/OD315, and the target variables are the Class of each observation (0,1 or 2). Each branch (i.e., the vertical lines in figure 1 below) corresponds to a feature, and each leaf represents a target variable. At the same time, they offer significant versatility: they can be used for building both classification and regression predictive models.ĭecision tree algorithms work by constructing a “tree.” In this case, based on an Italian wine dataset, the tree is being used to classify different wines based on alcohol content (e.g., greater or less than 12.9%) and degree of dilution (e.g., an OD280/OD315 value greater or less than 2.1). One of the advantages of the decision trees over other machine learning algorithms is how easy they make it to visualize data. The code that I use in this article can be found here.ĭecision tree learning is a common type of machine learning algorithm. I’ll also demonstrate how to create a decision tree in Python using ActivePython by ActiveState, and compare two ensemble techniques, Random Forest bagging and extreme gradient boosting, that are based on decision tree learning. In this post I’ll take a look at how they each work, compare their features and discuss which use cases are best suited to each decision tree algorithm implementation. Random Forest and XGBoost are two popular decision tree algorithms for machine learning.
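As a minimal sketch of fitting such a tree with scikit-learn's DecisionTreeClassifier, the example below uses only the Alcohol and OD280/OD315 features. It is not the article's original code: it assumes scikit-learn's bundled `load_wine` copy of the UCI wine data in place of the CSV download, and the train/test split, `max_depth=2` and `random_state=0` are illustrative choices rather than settings taken from the post.

```python
from sklearn.datasets import load_wine
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier, export_text

# Assumption: scikit-learn's bundled copy of the UCI wine data stands in
# for the CSV file used in the article.
wine = load_wine(as_frame=True)

# Keep only the two features discussed in the text (scikit-learn's column names).
features = ["alcohol", "od280/od315_of_diluted_wines"]
X = wine.data[features]
y = wine.target  # cultivar class: 0, 1 or 2

# Hold out 30% of the observations to check the tree on unseen data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# A shallow tree keeps the printed rules easy to read.
tree = DecisionTreeClassifier(max_depth=2, random_state=0)
tree.fit(X_train, y_train)

# Print the learned splits as text, then the accuracy on the held-out set.
print(export_text(tree, feature_names=features))
accuracy = tree.score(X_test, y_test)
print(f"Held-out accuracy: {accuracy:.2f}")
```

Limiting the depth makes the printed rules resemble the small two-feature tree described in the text; a deeper tree would usually fit the training data more closely at the cost of readability.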
